What incrementality testing is

Incrementality testing, also called lift testing or holdout experiments, is straightforward: you stop spending on a channel or tactic for a portion of your audience and measure what happens to that group versus a control group that received normal exposure.

A geo-lift test works at the geographic level. You pick a city or region, pause your Google ads there for four weeks, and measure how many conversions you lose compared to a matched control area where you kept spending at normal levels. The difference is your estimate of the incremental value of Google ads in that market. A holdout test works at the audience level. You set aside a small percentage of users (1-5%) who never see your retargeting ads. After several weeks, you compare their conversion rate to that of the audience that sees retargeting normally. The difference is the lift from retargeting.
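The holdout comparison described above comes down to simple arithmetic. The sketch below is a minimal illustration, not a production analysis: the function name `holdout_lift`, the audience sizes, and the conversion counts are all hypothetical, and it assumes users were randomly assigned and that a normal approximation for the difference of two proportions is acceptable.

```python
import math

def holdout_lift(exposed_users, exposed_conversions,
                 holdout_users, holdout_conversions, z=1.96):
    """Compare the conversion rate of the exposed audience with the holdout group.

    Assumes random assignment and a non-zero holdout conversion rate.
    z=1.96 gives an approximate 95% interval.
    """
    p_exposed = exposed_conversions / exposed_users
    p_holdout = holdout_conversions / holdout_users
    diff = p_exposed - p_holdout
    # Standard error of the difference between two independent proportions
    se = math.sqrt(p_exposed * (1 - p_exposed) / exposed_users
                   + p_holdout * (1 - p_holdout) / holdout_users)
    return {
        "absolute_lift": diff,                # percentage-point difference
        "relative_lift": diff / p_holdout,    # lift relative to the holdout rate
        "ci_low": diff - z * se,
        "ci_high": diff + z * se,
    }

# Hypothetical numbers: a 3% holdout carved out of 100,000 users
result = holdout_lift(exposed_users=97_000, exposed_conversions=2_910,
                      holdout_users=3_000, holdout_conversions=75)
print(result)
```

With these made-up numbers the exposed group converts at 3.0% and the holdout at 2.5%, a 20% relative lift, yet the interval around the difference still spans zero. A small holdout keeps the cost of withholding ads low, but it also makes the estimate noisy.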

The appeal is intuitive. You're not inferring anything. You're directly measuring the effect by deliberately withholding marketing and seeing what changes. It feels empirical.

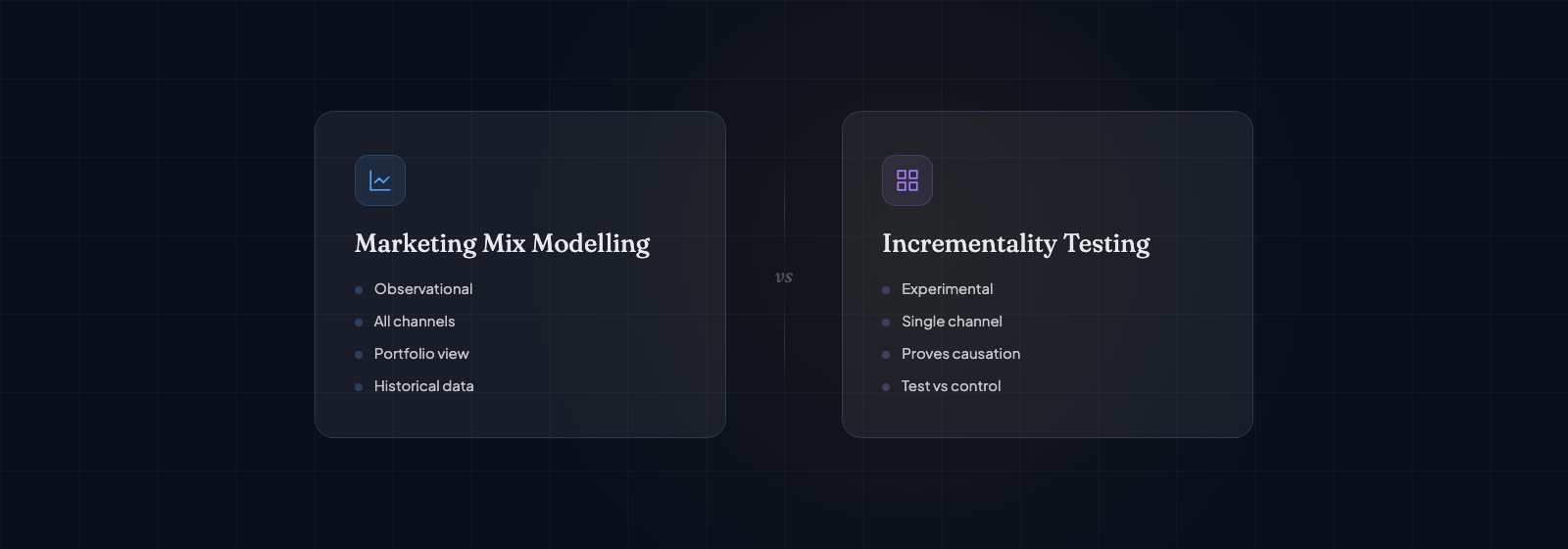

How incrementality differs from MMM fundamentally

The difference isn't academic. It determines what each tool can and can't do.

Incrementality tests are answer-specific, MMM is framework-wide. An incrementality test tells you: "What is Google Ads driving right now?" It's a focused question with a focused answer. MMM tells you: "Given your current budget allocation across all channels and external factors, how much is each channel contributing?" That's a systems question. If you want to know the incremental value of one channel right now, incrementality testing is faster and more direct. If you want to optimize the allocation across all channels simultaneously, you need MMM.

Incrementality tests measure active campaigns, MMM measures all spending. You can only run an incrementality test on something you can deliberately withhold from part of your audience. Holding out email sends is awkward (if subscribers don't get your email, they notice). You can't easily test organic search or brand awareness channels, because there is nothing you can cleanly switch off for one group. MMM doesn't care. It measures whatever data you feed it, including channels that are hard to test in live experiments.

Incrementality is faster, MMM is historical. A well-designed geo-lift can give you results in 4-8 weeks. MMM needs historical data, typically 18-24 months of it, before it can be built reliably. If you have a new channel or new product, MMM can't tell you much. With incrementality, you can run a test immediately.

Incrementality measures short-term effects, MMM captures long-term dynamics. An incrementality test running for six weeks shows you the immediate conversion effect. But brand building, seasonal patterns, and cumulative wear-out effects play out over months. MMM's historical approach captures these longer-term patterns. A channel might show weak short-term incrementality but strong long-term effects on repeat purchase rate.

The worst mistake companies make is treating incrementality test results as permanent truth about a channel. An email test from March might show one lift, an email test from September might show a completely different lift. Context changes, list fatigue changes, competitive noise changes. Incrementality tests measure a moment in time. They're useful moments, but moments nonetheless.

Strengths and weaknesses of each approach

Incrementality testing works well for:

- Getting a fast answer about a specific, new channel before MMM can build a view

- Measuring hard-to-isolate channels like social organic reach or word-of-mouth

- Validating assumptions when you need confidence quickly

- Testing tactical changes (creative refresh, audience change, frequency adjustment)

- Operating in regulated industries where historical modeling is harder

Incrementality testing struggles with:

- Testing expensive channels where pausing spend is costly (TV, out of home, brand sponsorships)

- Measuring interaction effects between channels (does email work better when Google ads are running?)

- Testing multiple channels at the same time (you can only pause one at a time, mostly)

- Accounting for seasonal variation (four weeks might be an abnormal period)

- Detecting small effects (if a channel's true lift is 2%, you need a huge test to find it reliably)
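The last point, that small effects need huge tests, can be made concrete with a standard sample-size approximation for comparing two proportions. This is a rough sketch under stated assumptions: the helper name `users_per_group` is hypothetical, the 3% baseline conversion rate is invented for illustration, and the formula assumes two equally sized groups, a two-sided 5% significance level, and 80% power. A 1-5% holdout is far from equal-sized, so treat the output as an order-of-magnitude guide only.

```python
import math
from statistics import NormalDist

def users_per_group(baseline_rate, relative_lift, alpha=0.05, power=0.8):
    """Approximate users needed per group to detect a relative lift.

    Uses the normal-approximation formula for two independent proportions
    with equal group sizes.
    """
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_lift)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided significance
    z_beta = NormalDist().inv_cdf(power)           # desired power
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return math.ceil((z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2)

# Hypothetical 3% baseline conversion rate
small_effect = users_per_group(baseline_rate=0.03, relative_lift=0.02)
large_effect = users_per_group(baseline_rate=0.03, relative_lift=0.20)
print(small_effect, large_effect)
```

Under these assumptions a 2% relative lift needs more than a million users per group, while a 20% lift needs fewer than twenty thousand. Required sample size grows with the inverse square of the effect you are trying to detect.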

MMM works well for:

- Understanding the full picture of how all channels work together

- Detecting seasonal patterns and long-term trends

- Estimating channel elasticity (what happens if I shift 10% of spend?)

- Modeling expensive scenarios you can't test (what if we paused TV entirely?)

- Working with limited audience-level data (when privacy rules block detailed tracking)

MMM struggles with:

- Attribution overlap and collinearity (when spending on multiple channels rises and falls together)

- New channels or major tactic changes (the historical data doesn't apply)

- Tightly correlated external events (unusual weather, competitor action, viral moments)

- Small sample problems (rare channels, small budget channels)

- Explaining results to skeptical teams unfamiliar with statistical modeling

How they work together

This is where the real power lies. Companies that do this well use incrementality testing to calibrate MMM.

Here's the pattern: MMM runs on historical data and estimates the contribution of each channel. But MMM lives in a world of uncertainty. Multiple models could fit the data equally well but assign different values to channels. Your retargeting channel might get 15% credit in one model and 22% in another. Both fit the data.
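A toy example shows how two models can fit equally well and still disagree on credit. The data below is invented, and the two "models" are just hand-picked coefficient pairs rather than the output of any real MMM. Because social spend always moves in lockstep with search spend, the data cannot tell the two apart.

```python
# Hypothetical weekly data: social spend is always exactly half of search spend
spend_search = [10, 20, 30, 40]
spend_social = [5, 10, 15, 20]
sales = [35, 70, 105, 140]

def sum_squared_error(coef_search, coef_social):
    """How badly a pair of per-dollar coefficients misses observed sales."""
    return sum(
        (y - (coef_search * a + coef_social * b)) ** 2
        for a, b, y in zip(spend_search, spend_social, sales)
    )

def search_share(coef_search):
    """Fraction of total sales credited to search under a given coefficient."""
    return coef_search * sum(spend_search) / sum(sales)

model_a = (3.0, 1.0)  # search gets most of the credit
model_b = (2.0, 3.0)  # social gets much more credit

for name, (c_search, c_social) in {"A": model_a, "B": model_b}.items():
    print(name, sum_squared_error(c_search, c_social), round(search_share(c_search), 3))
```

Both coefficient pairs reproduce sales with zero error, yet model A credits search with about 86% of sales and model B with about 57%. Real data is rarely perfectly collinear, but the closer channels move together, the wider the range of answers that fit.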

Then you run an incrementality test. You pause email marketing for three weeks and measure the lift directly. Maybe it's a 4.2% conversion lift. Now you have a data point that breaks the tie. You know with much more confidence what email drives, within the test's margin of error. You feed that back into the MMM. The model recalibrates. It says, "If email drives 4.2%, then given the interactions and overlaps I see in the rest of the data, here's the best picture of other channels."
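One simple way to picture this recalibration is a precision-weighted average of the model's estimate and the test's estimate (a normal-normal Bayesian update). This is an illustrative stand-in, not a description of how any particular MMM tool ingests experiments. The 4.2% comes from the example above; the model's 7% estimate and both uncertainty values are hypothetical.

```python
def combine_estimates(model_mean, model_sd, test_mean, test_sd):
    """Precision-weighted combination of two independent, roughly normal estimates.

    The estimate with the smaller standard deviation gets more weight.
    """
    model_precision = 1 / model_sd ** 2
    test_precision = 1 / test_sd ** 2
    total_precision = model_precision + test_precision
    combined_mean = (model_mean * model_precision
                     + test_mean * test_precision) / total_precision
    combined_sd = total_precision ** -0.5
    return combined_mean, combined_sd

# Hypothetical: the model says email drives 7% (+/- 3), the test says 4.2% (+/- 1)
mean, sd = combine_estimates(model_mean=7.0, model_sd=3.0,
                             test_mean=4.2, test_sd=1.0)
print(round(mean, 2), round(sd, 2))
```

The combined estimate lands close to the test result because the test is the tighter of the two measurements, and the combined uncertainty is smaller than either input. In a full MMM the same idea usually appears as a prior or constraint on the channel's coefficient, which then shifts the estimates for the other channels.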

This is how incrementality testing and MMM complement rather than compete. Incrementality gives you precision on specific channels. MMM gives you the full picture. Incrementality tells you local truth. MMM tells you system truth.

Deciding which approach you need first

The decision matrix is practical. Start here:

If you have less than 12 months of data: Do incrementality testing. You have nothing to model yet. Get fast answers on your key channels while you build historical data.

If you're optimizing within a channel: Do incrementality testing. You want to know if creative refresh works, or if expanding an audience has incremental value. That's a test question, not a model question.

If you're reallocating budget across channels: Do MMM. You need to understand interactions and tradeoffs. Testing one channel at a time won't show you what happens when you move budget from channel A to channel B.

If you have strong uncertainty about a channel's value: Do incrementality testing first. It's faster to get an answer than to wait for MMM. Use the test result to inform the model.

If a channel is expensive to pause: Do MMM. You can't afford to run a six-week test with no spend. The model lets you estimate without the cost.
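The rules above can be written down as a small lookup. This is a sketch of the article's checklist only: the function name and parameters are hypothetical, and because the rules can point in different directions for the same channel, the function returns every rule that applies rather than inventing a precedence order.

```python
def matching_rules(months_of_data, within_channel=False, cross_channel=False,
                   high_uncertainty=False, expensive_to_pause=False):
    """Return (approach, reason) pairs for every rule that applies."""
    rules = [
        (months_of_data < 12, "incrementality", "less than 12 months of data"),
        (within_channel, "incrementality", "optimizing within a channel"),
        (cross_channel, "MMM", "reallocating budget across channels"),
        (high_uncertainty, "incrementality", "strong uncertainty about a channel's value"),
        (expensive_to_pause, "MMM", "channel is expensive to pause"),
    ]
    return [(approach, reason) for applies, approach, reason in rules if applies]

# A young company testing a creative refresh
print(matching_rules(months_of_data=6, within_channel=True))

# A mature advertiser moving budget into TV
print(matching_rules(months_of_data=24, cross_channel=True, expensive_to_pause=True))
```

When the returned rules disagree, for example a new advertiser with an expensive TV buy, the checklist alone does not settle the question and the trade-off needs a judgment call.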

Building a measurement stack that uses both

The optimal state is neither MMM alone nor incrementality testing alone. It's a rotation where they reinforce each other.

Year one: Start building toward the 18 months of data MMM needs, and run two incrementality tests on your largest channels. The tests give you calibration points. They also give you understanding that you'll use to design the first MMM.

Year two: Run your first full MMM now that you have historical data and benchmark tests. Use the test results to validate the model. Where they disagree, investigate why. Then run one fresh incrementality test on a secondary channel the model identifies as uncertain.

Year three and beyond: Refresh MMM quarterly or semi-annually as new data arrives. Run incrementality tests on tactical questions (creative changes, audience experiments, new channels) while the model handles strategic questions (budget reallocation, seasonal planning, scenario modeling).

The pattern is: use MMM to understand your system, use incrementality testing to validate specific claims, use test results to improve the model. Neither is a complete solution on its own. Together, they give you confidence in your decisions.