The upper-funnel justification problem

Every brand marketer has been in the meeting. The CFO asks why you're spending on TV or sponsorship when the performance team can show clear CPAs on paid search. A TV campaign costs half a million pounds and you can't prove a single sale came from it. Search delivers measurable conversions. The logic seems obvious: cut TV, spend more on search.

The answer is that search captures demand that brand activity creates. But proving it is hard.

Last-click attribution tells you nothing about this. It gives all the credit to the final touchpoint. If someone sees your TV ad, forgets about you, then searches your brand name three weeks later and converts, the search channel gets 100% of the credit. TV gets zero. This systematically undervalues awareness channels and overvalues conversion channels. It's why CMOs feel perpetually on the defensive.
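To make the bias concrete, here is a minimal sketch of the last-click rule. The journeys and channel names are hypothetical, invented purely for illustration, and the function is a simplification of what analytics platforms actually do.

```python
from collections import Counter

def last_click_credit(journeys):
    """Give each conversion's full credit to the final touchpoint in its journey."""
    credit = Counter()
    for touchpoints in journeys:
        if touchpoints:  # skip empty journeys
            credit[touchpoints[-1]] += 1
    return credit

# Hypothetical converting journeys, ordered from first to last touchpoint.
journeys = [
    ["tv", "brand_search"],
    ["tv", "social", "brand_search"],
    ["social", "email"],
]

credit = last_click_credit(journeys)
# TV appears in two of the three journeys but is never last, so it gets no credit.
print(credit["brand_search"], credit["email"], credit["tv"])
```

TV started two of the three journeys, yet the rule leaves it with nothing because it was never the final step.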

The problem isn't that upper-funnel channels don't work. It's that your measurement system is biased against them.

What last-click attribution misses

Think of a football match. The striker scores the goal. Last-click attribution gives the striker all the credit. The midfielder who created the chance, the defender who won possession, the goalkeeper who started the counterattack—they get nothing.

This is absurd on a pitch. It's equally absurd in marketing. But it's what last-click attribution does systematically.

It creates a false hierarchy. Performance channels look superior because they're the last step before purchase. They capture the moment of conversion. Upper-funnel channels look weak because they come first—they create awareness and intent, but someone else gets the credit for the actual sale.

It hides dependencies. Search doesn't create demand from nothing. Most search volume comes from people who've already heard of you. If you cut your TV budget, search demand doesn't stay flat. It drops. You're measuring the wrong thing. You're measuring who was last, not what caused the outcome.

It's structurally unfair to offline channels. How do you attribute a sale to radio or outdoor advertising? You can't track individual users. So most brands either ignore offline channels entirely or use vague estimates. "Brand awareness probably contributed 20%." This guess has no basis in data. But it feels better than admitting you don't know, and a number nobody can defend makes upper-funnel budgets the first thing to cut when money gets tight.

The result: brands systematically underinvest in upper funnel, overinvest in lower funnel, and wonder why their CAC keeps rising.

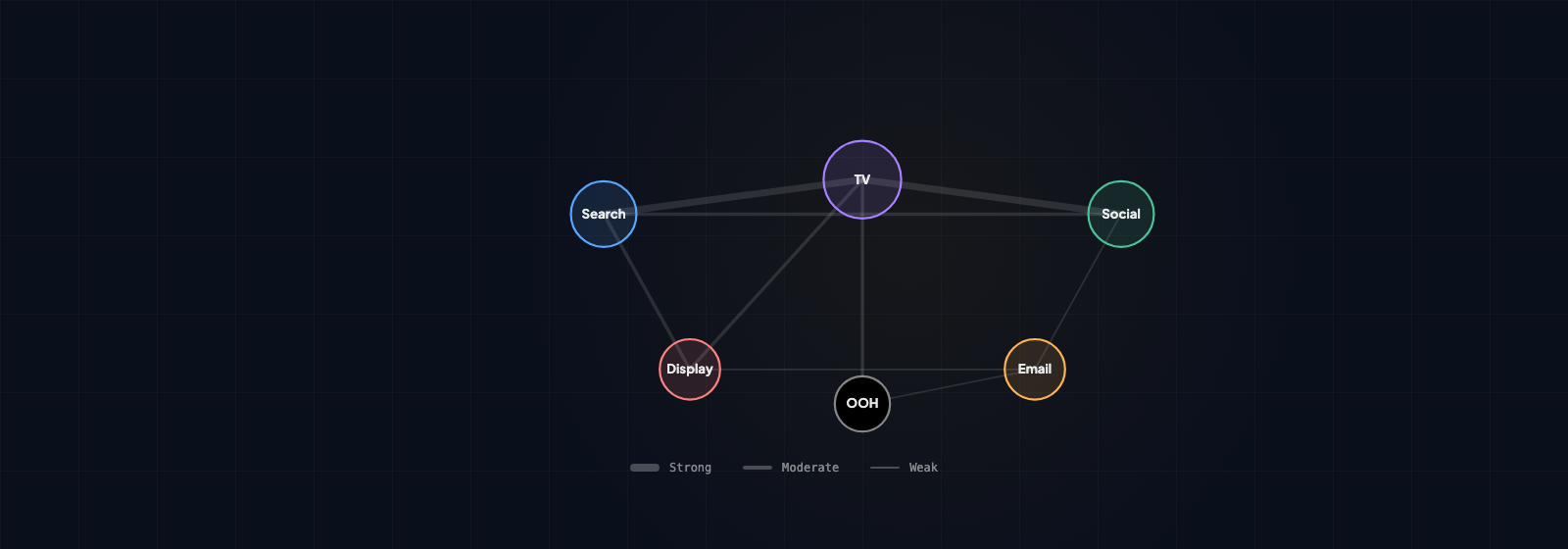

How MMM captures channel interactions

Marketing Mix Modelling approaches this differently. Instead of tracking individual user journeys, it models the relationship between all marketing inputs and business outcomes simultaneously. Weekly spend across TV, search, social, sponsorship, email, everything. Weekly sales or revenue. What patterns emerge over time?

The model doesn't care about last-click. It asks a different question: as spend on each channel rises and falls from week to week, how do sales move with it, and what happens when channels run together compared with when some are paused? This gives an estimate of each channel's real contribution, including the interactions between channels.

Synergy effects become visible. TV alone delivers a 2.5x ROI. Social alone delivers 3.2x. But when TV and social run simultaneously, the combined ROI is 4.1x, higher than either channel achieves on its own. Why? Because TV-driven awareness increases social ad click-through rates. People are more likely to engage with your social content if they've already seen your TV campaign.

This interaction effect is invisible in platform reporting. Each channel claims its own ROI. You see them as independent. MMM reveals that they're not. They amplify each other.
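One simple way to picture an interaction effect is to compare four kinds of week: neither channel live, TV only, social only, and both. The sketch below uses hypothetical sales figures. A real MMM would estimate the same quantity with an interaction term in a regression, controlling for seasonality, price and other factors, so treat this as an illustration of the idea and not as the modelling method.

```python
def interaction_effect(base, tv_only, social_only, both):
    """Lift from running both channels beyond the sum of their individual lifts."""
    tv_lift = tv_only - base
    social_lift = social_only - base
    combined_lift = both - base
    return combined_lift - (tv_lift + social_lift)

# Hypothetical average weekly sales (in £k) for the four kinds of week.
synergy = interaction_effect(base=100, tv_only=125, social_only=116, both=150)
print(synergy)  # a positive value means the channels amplify each other
```

If the channels were truly independent, the result would be close to zero. Platform reports cannot show this number because each platform only sees its own channel.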

Halo effects are quantified. When you run sponsorship, awareness doesn't just help sponsorship. It improves performance across all digital channels. Search gets better CTRs. Display gets better engagement. Email gets better open rates. The halo effect can be worth more than the direct impact of the sponsorship itself. But you can't see this in channel reporting—you only see the channels individually.

Diminishing returns become clear. If you increase TV spend by 20%, sales don't increase by 20%. There's a curve. The first pound of TV spend delivers higher returns than the hundredth pound. This is critical for budget allocation. It tells you where each channel is starting to saturate. Attribution can't show you this. It only divides credit for conversions that have already happened, so it says nothing about what the next pound of spend would return.
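The shape of that curve can be sketched with a simple saturating function. The functional form and the parameters (`max_effect`, `half_saturation`) are assumptions chosen for illustration. MMM tools use a variety of saturation curves, and the right one has to be fitted to your own data.

```python
def response(spend, max_effect, half_saturation):
    """Concave response curve: incremental sales approach max_effect as spend grows."""
    return max_effect * spend / (spend + half_saturation)

def marginal_return(spend, max_effect, half_saturation, step=1.0):
    """Extra sales generated by one more unit of spend at the current level."""
    return (response(spend + step, max_effect, half_saturation)
            - response(spend, max_effect, half_saturation))

# Hypothetical parameters: spend and sales both in £k per week.
params = dict(max_effect=500.0, half_saturation=100.0)

for spend in (0, 100, 400):
    print(spend, round(marginal_return(spend, **params), 2))

# A 20% increase in spend from 100 to 120 lifts sales by less than 20%.
sales_ratio = response(120, **params) / response(100, **params)
print(round(sales_ratio, 3))
```

The marginal return shrinks as spend rises, which is the information a budget allocation needs: move money away from channels that are deep into the flat part of their curve.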

What synergy effects look like in practice

Here's a real example. A CPG brand runs MMM and discovers these individual channel ROIs:

- TV: 2.5x ROI

- Social: 3.2x ROI

- Search: 2.1x ROI

- Email: 5.8x ROI

Simple maths suggests email is the clear winner. Spend everything on email.

But the synergy analysis reveals that when TV runs, the effectiveness of all digital channels increases. The interaction terms from the model show:

- TV alone: 2.5x

- TV + Social interaction: +0.6x (12% lift to the combined effect)

- TV + Search interaction: +0.4x (8% lift to the combined effect)

- TV + Email interaction: +0.3x (5% lift to the combined effect)

The TV synergy effects with other channels total 1.3x on top of TV's direct 2.5x. That's roughly 50% more value than TV's direct effect, and you'd miss it completely if you looked at channels individually.
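The arithmetic behind that total is easy to check. The short sketch below simply adds up the interaction figures from the list above and compares them with TV's direct ROI. The variable names are illustrative.

```python
tv_direct = 2.5
interactions = {"social": 0.6, "search": 0.4, "email": 0.3}

# Sum of TV's interaction effects with the other channels.
synergy_total = round(sum(interactions.values()), 2)

# Synergy expressed as a share of TV's direct effect.
uplift_vs_direct = synergy_total / tv_direct

print(synergy_total, f"{uplift_vs_direct:.0%}")  # prints: 1.3 52%
```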

Now the reallocation decision changes. You don't cut TV to maximise email. You keep TV running because it's amplifying everything else.

Defending brand spend with data

This is the power of MMM for a brand marketer. You can walk into the CFO's office with a decomposition chart.

Here's what base sales look like without marketing. This is the underlying demand in your market. It accounts for seasonality, pricing, distribution, competitor activity. It's what you'd sell even if you didn't spend a pound on marketing.

Here's what each channel adds. Search adds £2.1m in incremental revenue annually. TV adds £1.8m. Social adds £0.6m. Email adds £1.2m. These are actual lifts, isolated from confounding factors.

Here's the interaction effect. When these channels run together, there's an additional £0.9m in revenue created by the synergies between them. This is pure value that only exists because upper funnel and lower funnel work together.
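The figures above can be laid out as a simple decomposition table. This sketch only tallies the numbers quoted in the text, in £k to keep the arithmetic exact. Base sales are left out because no figure is given for them.

```python
# Incremental annual revenue by channel, in £k, as quoted above.
channel_lift = {"search": 2100, "tv": 1800, "social": 600, "email": 1200}
synergy = 900  # additional revenue from channels running together

direct_total = sum(channel_lift.values())
marketing_total = direct_total + synergy
synergy_share = synergy / marketing_total

for name, value in channel_lift.items():
    print(f"{name:<8} £{value / 1000:.1f}m")
print(f"{'synergy':<8} £{synergy / 1000:.1f}m")
print(f"{'total':<8} £{marketing_total / 1000:.1f}m")
print(f"synergy share of marketing-driven revenue: {synergy_share:.1%}")
```

On these numbers, synergy accounts for a little under a seventh of all marketing-driven revenue, none of which appears in any single channel's report.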

This turns the conversation from qualitative to quantitative. The CFO can't argue with the logic anymore. You're not saying "brand awareness is important." You're saying "TV creates £1.8m in direct revenue and amplifies another £0.3m through synergies."

It's the difference between defending brand spend and proving its value.

In our experience, brands that cut upper-funnel activity based on last-click attribution see performance channel costs increase within 3-6 months. The demand pipeline dries up. MMM helps you see this before it happens.

The honest limitations

MMM is good at detecting that channel interactions exist. It's less precise at quantifying them exactly. The synergy numbers should be used directionally, not to three decimal places.

Think of it this way: the question isn't usually "exactly how much is TV worth to three decimal places?" The question is "should we cut TV or not?" MMM answers that second question powerfully. It shows you the direction and magnitude of effects clearly enough to make strategic decisions.

The refresh challenge remains. Like all MMM, this needs to be updated regularly. A model built on 2025 data becomes less reliable in late 2026. Markets change. Consumer behaviour shifts. New channels emerge. The synergy patterns that were true last year might not hold this year. Quarterly or semi-annual refreshes are the norm for teams doing this right.

But a directional synergy model that's six months old is still more useful than last-click attribution that's completely blind to interactions.

Interaction effects are real. You don't need perfect precision to know that upper-funnel and lower-funnel channels work together. You just need evidence that they do, and enough clarity to defend the investment. MMM provides both.