The difference between effectiveness and efficiency

Before we talk about measuring marketing effectiveness, we need to clear up a common confusion. Effectiveness is not the same as efficiency.

Efficiency is about doing things cheaply. A low cost per click, a high email open rate, a high website conversion rate. These are useful metrics, but they're about optimising the mechanics of what you're doing. Efficiency is internal.

Effectiveness is about delivering business results. Did the marketing move the needle on revenue? Did it build the business? Did it create the outcomes you were trying to achieve? Effectiveness is external. It's about the impact on the business, not the quality of the execution.

A campaign might be highly efficient on every measure you look at. Cheap clicks, high engagement, strong conversion rates. But if it doesn't shift sales or grow customer value, it's not effective. Conversely, a campaign might have mediocre engagement metrics but still drive significant revenue growth.

Most companies get this backwards. They optimise obsessively for efficiency metrics because they're easy to measure and visible in the tools they use. Then they wonder why improving those metrics doesn't correlate with business growth.

The first rule of measuring marketing effectiveness: only measure what relates directly to business outcomes.

Which metrics matter

There are hundreds of marketing metrics. Most of them don't tell you whether your marketing is working.

Start with this hierarchy. At the top are business outcome metrics. These connect directly to revenue and profit. Below them are customer behaviour metrics that indicate purchasing intent. At the bottom are engagement metrics that show attention and interest.

Outcome metrics (this is where effectiveness lives)

- Revenue from new customers. This is the primary measure of marketing effectiveness for acquisition programmes. Not leads generated. Not pipeline. Actual revenue from customers who didn't exist before.

- Customer lifetime value. Revenue is only half the story. A customer acquired for £500 who stays for two years and generates £5,000 in lifetime value is far more valuable than one who generates £2,000 over five years. Marketing effectiveness isn't just about acquisition; it's about the quality of customers you bring in.

- Return on marketing spend. Revenue attributable to marketing, divided by marketing spend. For every pound spent, how much comes back? This is the most direct measure of effectiveness.

- Customer retention and repeat purchase rates. This matters more than most marketers realise. Keeping existing customers is often more cost-effective than acquiring new ones. If your marketing attracts customers who never come back, you're not building a business.

- Market share and category penetration. In mature categories, effectiveness isn't always about acquiring new customers. It's about shifting share from competitors or growing the category itself.
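The arithmetic behind two of these outcome metrics is simple. Here is a minimal sketch that reuses the £500, £5,000 and £2,000 figures from the lifetime value example. The function names, the second customer's £500 acquisition cost, and the campaign figures are assumptions for illustration only.

```python
def return_on_marketing_spend(attributed_revenue: float, spend: float) -> float:
    """Revenue returned per pound of marketing spend."""
    if spend <= 0:
        raise ValueError("spend must be positive")
    return attributed_revenue / spend


def ltv_to_acquisition_cost(lifetime_value: float, acquisition_cost: float) -> float:
    """Lifetime value generated per pound spent acquiring the customer."""
    if acquisition_cost <= 0:
        raise ValueError("acquisition_cost must be positive")
    return lifetime_value / acquisition_cost


# Lifetime value example: both customers assumed to cost £500 to acquire.
customer_a = ltv_to_acquisition_cost(lifetime_value=5_000, acquisition_cost=500)
customer_b = ltv_to_acquisition_cost(lifetime_value=2_000, acquisition_cost=500)

# Hypothetical campaign: £120,000 of attributed revenue on £40,000 of spend.
roms = return_on_marketing_spend(attributed_revenue=120_000, spend=40_000)

print(f"Customer A returns £{customer_a:.1f} per £1 of acquisition cost")
print(f"Customer B returns £{customer_b:.1f} per £1 of acquisition cost")
print(f"Return on marketing spend: £{roms:.2f} per £1")
```

The hard part is not the division. It is deciding how much revenue is genuinely attributable to marketing, which the rest of this guide covers.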

Behaviour metrics (which often indicate effectiveness)

These are leading indicators of sales impact. A spike in these metrics often predicts revenue changes weeks or months later.

- Website visits from target audiences

- Content downloads and deep engagement

- Shopping cart additions and product page visits

- Enquiry volume and quality

- Email click-through rates from promotional messages

Engagement metrics (least predictive of effectiveness)

These tell you whether people are paying attention. They're useful for optimisation, but don't confuse attention with effectiveness.

- Impressions, reach, and frequency

- Clicks and click-through rates

- Engagement rate, likes, comments, shares

- Email open rates

- Video views (without context)

The problem: engagement metrics are easy to move. You can get thousands of impressions, clicks, and shares without generating a single customer. And the tools you use (Google Analytics, Meta, email platforms) highlight these metrics because they're what the tools naturally measure.

Your task is to look past what's easy to measure and focus on what matters.

Measuring campaign effectiveness

A campaign is a specific initiative aimed at a specific outcome. How do you know if it worked?

Start with a baseline

You can't measure effectiveness without knowing what would have happened anyway. If sales go up 10% after launching a campaign, did the campaign do that, or would they have gone up anyway?

The gold standard is a randomised controlled test. Randomly split your audience into two groups: one that sees your campaign, one that doesn't. Measure the difference in outcomes. This is expensive and not always practical, but it's the cleanest way to isolate marketing impact.
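Reading the result of such a test means comparing conversion rates between the two groups and checking that the gap is bigger than chance would explain. Here is a minimal sketch using a standard two-proportion z-test. The function name and the group sizes and conversion counts are hypothetical.

```python
import math


def conversion_lift(test_conversions, test_size, control_conversions, control_size):
    """Compare conversion rates between an exposed group and a holdout group."""
    if min(test_size, control_size) <= 0 or control_conversions <= 0:
        raise ValueError("both groups need members and the control needs conversions")
    test_rate = test_conversions / test_size
    control_rate = control_conversions / control_size

    # Pooled rate under the assumption that the campaign had no effect.
    pooled = (test_conversions + control_conversions) / (test_size + control_size)
    std_error = math.sqrt(pooled * (1 - pooled) * (1 / test_size + 1 / control_size))
    if std_error == 0:
        raise ValueError("cannot test significance when every or no customer converts")

    z = (test_rate - control_rate) / std_error
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return {
        "absolute_lift": test_rate - control_rate,
        "relative_lift": (test_rate - control_rate) / control_rate,
        "z": z,
        "p_value": p_value,
    }


# Hypothetical test: 20,000 customers per group, 600 vs 500 purchases.
result = conversion_lift(600, 20_000, 500, 20_000)
print(f"Relative lift: {result['relative_lift']:.1%}, p-value: {result['p_value']:.4f}")
```

A small p-value suggests the difference is unlikely to be noise. It says nothing about whether the lift was worth the spend, so pair it with the revenue figures.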

More practically, use historical comparisons. Compare the same period in previous years, adjusted for known changes in business conditions. Track weekly trends before and after the campaign launch. Look for a sharp change at the point the campaign went live.
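One simple way to build that historical baseline is to scale last year's sales for the campaign period by the growth you were already seeing before launch. The sketch below assumes that pre-campaign growth would have continued unchanged, which is a strong assumption. The function name and all figures are hypothetical.

```python
def expected_sales_without_campaign(
    last_year_campaign_period: float,
    last_year_pre_period: float,
    this_year_pre_period: float,
) -> float:
    """Scale last year's campaign-period sales by year-on-year pre-period growth."""
    if last_year_pre_period <= 0:
        raise ValueError("last_year_pre_period must be positive")
    growth = this_year_pre_period / last_year_pre_period
    return last_year_campaign_period * growth


# Hypothetical figures in pounds.
expected = expected_sales_without_campaign(
    last_year_campaign_period=200_000,
    last_year_pre_period=160_000,
    this_year_pre_period=200_000,
)
actual = 280_000
incremental = actual - expected
uplift = incremental / expected

print(f"Baseline £{expected:,.0f}, incremental £{incremental:,.0f} ({uplift:.0%} uplift)")
```

Adjust the baseline for anything else you know changed, such as pricing, distribution or a competitor's launch, before crediting the remainder to the campaign.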

Measure pre and post

Don't just measure what happens during the campaign. Measure what happens before and after.

Some marketing effects carry over. A brand awareness campaign might not drive immediate sales, but it lowers the friction on sales conversations for months afterwards. An email campaign might drive purchases immediately, but also increases repeat purchase rates in the following period.

A campaign that looks modestly effective during the promotion might look significantly more effective when you measure the full carry-over effect.

Account for multiple touchpoints

Most customer journeys involve multiple marketing touches across multiple channels. The customer sees a social media ad, reads a blog post, gets an email, then buys.

Which channel gets credit? That's the attribution problem. Last-click attribution gives all credit to the last touchpoint. First-click attribution gives all credit to the first. Neither is accurate.

For campaign effectiveness measurement, the solution is simpler: measure the campaign as a system, not individual channels. Ask: did customers who were exposed to this campaign across all its channels buy more than customers who weren't?

This is where marketing mix modelling (MMM) becomes valuable. When you have multiple campaigns running simultaneously across multiple channels, it becomes nearly impossible to isolate the impact of any single campaign using traditional methods. MMM estimates the effect of each component statistically, allowing you to measure effectiveness far more reliably in complex marketing environments.

Measuring effectiveness by channel

Each channel has different characteristics. How you measure effectiveness depends on what you're measuring.

Email marketing effectiveness

Start with conversion rates and revenue per recipient. Not open rates or click-through rates; those are engagement metrics. What matters: did the email lead to a sale?
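To see why the choice of metric matters, compare two sends on both kinds of measure. This is a minimal sketch with hypothetical figures and a hypothetical function name. The email with the better open rate is not the one that earns more per recipient.

```python
def email_outcomes(recipients: int, opens: int, orders: int, revenue: float) -> dict:
    """Engagement and outcome metrics for a single email send."""
    if recipients <= 0:
        raise ValueError("recipients must be positive")
    return {
        "open_rate": opens / recipients,              # engagement metric
        "conversion_rate": orders / recipients,       # outcome metric
        "revenue_per_recipient": revenue / recipients,  # outcome metric
    }


# Hypothetical sends to the same 50,000-person list.
sends = {
    "newsletter": email_outcomes(recipients=50_000, opens=20_000, orders=250, revenue=25_000),
    "offer": email_outcomes(recipients=50_000, opens=11_000, orders=750, revenue=45_000),
}

most_opened = max(sends, key=lambda name: sends[name]["open_rate"])
most_effective = max(sends, key=lambda name: sends[name]["revenue_per_recipient"])

print(f"Highest open rate: {most_opened}")
print(f"Highest revenue per recipient: {most_effective}")
```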

Track customer lifetime value of people acquired via email against people acquired through other channels. Email-acquired customers often have higher lifetime value because they're pre-qualified and actively engaging with your brand.

For retention emails, measure repeat purchase rate and frequency. Does sending regular emails increase how often customers buy? Segment by customer cohort to see whether customers who receive emails retain better than comparable customers who don't.

Social media marketing effectiveness

This is where most companies go wrong. They measure engagement and call it effectiveness.

Instead: track the percentage of your audience that sees your content and then takes a desired action (click, enquiry, purchase). Use platform conversion tracking (Meta Conversions API, LinkedIn conversion tracking) to measure actual outcomes, not clicks.

Test different audience segments (by geography, demographics, interests) and measure which segments have the highest conversion rates and customer lifetime value. A segment with lower engagement but higher-quality customers is more valuable than a highly engaged low-quality segment.

For brand awareness campaigns, use brand lift studies. Survey a sample of people who were exposed to your social content and a control group that wasn't. Measure the difference in brand awareness, consideration, and preference.

Billboard and out-of-home effectiveness

OOH is often dismissed as impossible to measure. This is wrong. It's just less obvious.

Use frequency and impression data from your media planner as a starting point. Then measure changes in relevant metrics during the campaign period: brand search volume, website visits, store visits (if you're running OOH for a physical location), or direct response (a promo code visible only on the billboard).

Compare the cost of those outcomes to other channels. An OOH campaign that drives 10,000 website visits for £50,000 (£5 per visit) delivers far less per pound than one that drives the same visits for £20,000 (£2 per visit). This isn't obvious without measurement.

Run OOH in some areas but not others (if possible). Measure whether results in areas with heavy OOH presence differ significantly from areas without. Isolate the OOH impact.
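A common way to read a regional test like this is a difference-in-differences comparison: take the change in the areas with OOH and subtract the change in the areas without. Here is a minimal sketch. It assumes both sets of areas would have followed the same trend without the campaign. The function name and weekly figures are hypothetical.

```python
from statistics import mean


def difference_in_differences(treated_before, treated_after, control_before, control_after):
    """Change in treated areas minus change in control areas (average per week)."""
    treated_change = mean(treated_after) - mean(treated_before)
    control_change = mean(control_after) - mean(control_before)
    return treated_change - control_change


# Hypothetical weekly store visits, three weeks before and three weeks during.
ooh_before = [10_000, 10_200, 9_800]
ooh_during = [11_400, 11_600, 11_500]
no_ooh_before = [9_700, 9_900, 9_800]
no_ooh_during = [10_000, 10_200, 10_100]

ooh_effect = difference_in_differences(ooh_before, ooh_during, no_ooh_before, no_ooh_during)
print(f"Estimated extra weekly visits from OOH: {ooh_effect:,.0f}")
```

The control areas absorb whatever else changed in the period, such as seasonality or a national promotion, so only the gap between the two changes is credited to OOH.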

How UK companies measure this

Theory is useful. What do companies that get this right do?

Tesco measures marketing effectiveness against specific sales metrics by category, geography, and customer segment. They treat marketing as an investment that should deliver measurable return, not as a cost. Every campaign has defined success criteria tied to volume, revenue, or customer acquisition targets.

Unilever uses econometric modelling across their portfolio to understand the impact of spending changes in each channel on sales and profit. They've moved away from last-click attribution to a statistical approach that accounts for all the interactions between channels.

John Lewis tracks online and offline outcomes for integrated campaigns. They don't assume that a digital campaign only drives digital sales. They measure whether online advertising drives in-store visits, and whether in-store promotions increase online engagement.

Ocado focuses relentlessly on customer acquisition cost relative to customer lifetime value. Every marketing initiative is evaluated on whether it's bringing in customers who generate more value over time than the marketing cost.

The pattern: all of these companies define clear success metrics at the outset, measure outcomes against business results (not engagement metrics), and adjust their marketing allocation based on what's working.

Connecting marketing spend to outcomes

Here's the persistent challenge. You can measure the effectiveness of an individual campaign, but what about the cumulative effect of all your marketing across all channels?

If you increase email spend, search spend, and social spend all simultaneously, which one drove the revenue increase?

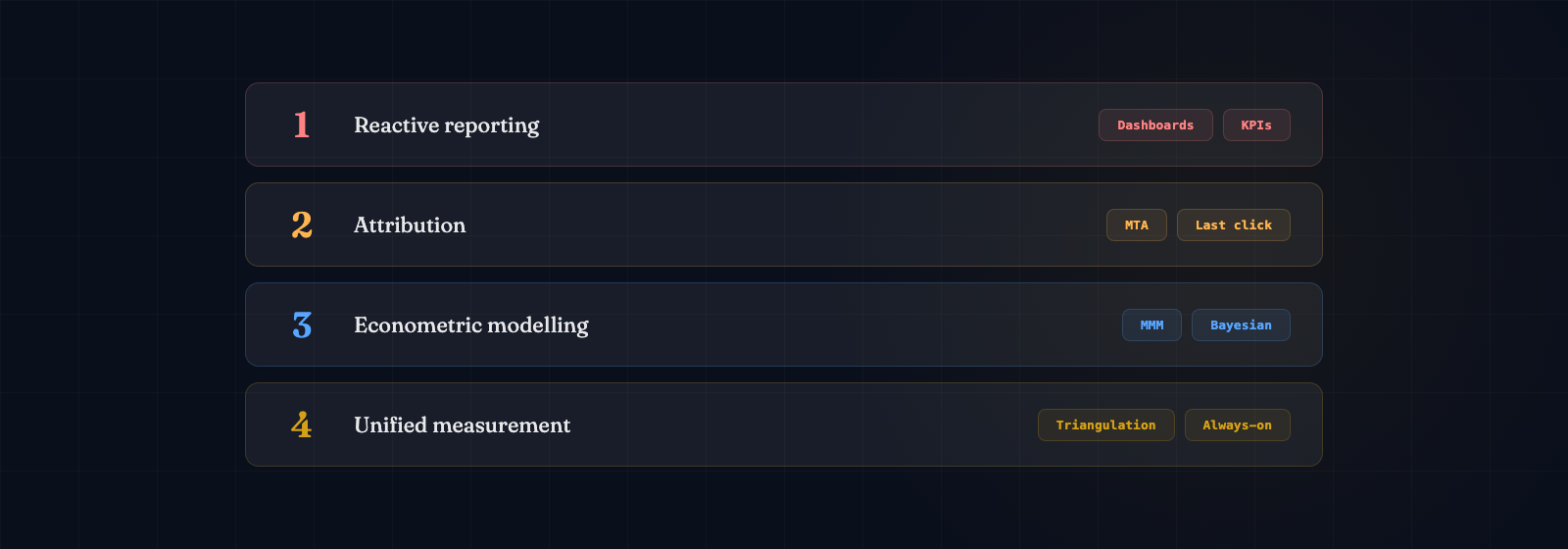

This is where simple attribution breaks down. If ten customers see email, social, search, and display ads before purchasing, how do you allocate the credit?

There are a few practical approaches:

Time-decay attribution gives more weight to touchpoints closer to the purchase. This reflects the reality that the final touchpoint often has more influence. But it still requires choosing weights arbitrarily.
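A minimal sketch of time-decay weighting follows, using an exponential half-life. The seven-day half-life is exactly the kind of arbitrary choice described above. The function name and the example journey are hypothetical.

```python
def time_decay_credit(touchpoints, half_life_days=7.0):
    """Split one conversion's credit across touchpoints, favouring recent ones.

    touchpoints: list of (channel, days_before_purchase) pairs.
    A touch loses half its weight for every half_life_days before the purchase.
    """
    if not touchpoints:
        raise ValueError("at least one touchpoint is required")
    weights = [(channel, 0.5 ** (days / half_life_days)) for channel, days in touchpoints]
    total = sum(weight for _, weight in weights)

    credit = {}
    for channel, weight in weights:
        # Sum the credit if the same channel appears more than once.
        credit[channel] = credit.get(channel, 0.0) + weight / total
    return credit


# Hypothetical journey: social ad 14 days out, blog post 7 days out, email on the day.
journey = [("social", 14), ("blog", 7), ("email", 0)]
credit = time_decay_credit(journey)

for channel, share in credit.items():
    print(f"{channel}: {share:.1%} of the credit")
```

Change the half-life and the split changes with it, which is why this method describes your assumptions as much as it describes your customers.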

Incrementality testing deliberately reduces or pauses spend in a channel and measures whether outcomes decline as a result. The disadvantage: it only works for channels where you're willing to reduce spending, and the results can be noisy.

Marketing mix modelling uses statistical methods to separate the contribution of each channel to total sales, accounting for seasonality, competitive activity, pricing, and other business factors. It's the most reliable method for understanding true marketing effectiveness when multiple factors are in play.
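At its core, this kind of model is a regression of sales on spend by channel. The toy sketch below fits an ordinary least squares model to synthetic weekly data generated from known channel effects, so you can see the method recover them. It is an illustration only: a real marketing mix model would also include carry-over, diminishing returns, seasonality, pricing and competitive activity. All names and figures are hypothetical.

```python
def solve_linear_system(a, b):
    """Solve a @ x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        if abs(m[pivot][col]) < 1e-12:
            raise ValueError("singular system: channel spends may be perfectly correlated")
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (m[i][n] - sum(m[i][j] * x[j] for j in range(i + 1, n))) / m[i][i]
    return x


def fit_ols(rows, y):
    """Ordinary least squares with an intercept, via the normal equations."""
    x = [[1.0] + list(row) for row in rows]
    k = len(x[0])
    xtx = [[sum(r[i] * r[j] for r in x) for j in range(k)] for i in range(k)]
    xty = [sum(r[i] * yi for r, yi in zip(x, y)) for i in range(k)]
    return solve_linear_system(xtx, xty)


# Synthetic weekly spend in £k for (email, search, social).
spend = [
    (5, 20, 10), (8, 18, 12), (6, 25, 9), (10, 22, 15),
    (7, 30, 11), (9, 19, 14), (4, 24, 13), (6, 27, 8),
]
# Synthetic sales in £k, built from known effects so the fit can be checked.
sales = [100 + 2.0 * e + 3.0 * s + 1.5 * so for e, s, so in spend]

base, email_effect, search_effect, social_effect = fit_ols(spend, sales)
print(f"Base sales: {base:.1f}k per week")
print(f"Sales per £1 of email: {email_effect:.2f}, search: {search_effect:.2f}, "
      f"social: {social_effect:.2f}")
```

The fit is exact here because the data has no noise. With real data the estimates come with uncertainty, and channels whose spend always moves together cannot be separated at all.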

Automated data preparation tools are helping companies move past traditional attribution faster. Instead of debating which last-click metric to trust, you can model the actual contribution of each channel to sales, week by week, and optimise your budget accordingly.

Getting started with effectiveness measurement

You don't need perfect measurement to start. Start with one campaign and one outcome metric.

Define success upfront. Before you run a campaign, define what effectiveness looks like. Not a feeling. A number. "This campaign is effective if we acquire 500 customers with a lifetime value of more than £2,000."

Identify your baseline. What would have happened if you hadn't run the campaign? Use historical data or a control group if possible.

Measure the outcome, not the output. Not impressions delivered or clicks generated. Revenue. Customers. Orders. Repeat purchases. The outcome you care about.

Account for the full period. Some effects are immediate. Some take months to materialise. Measure long enough to capture the full effect.

Calculate ROI. Total outcome value divided by total spend gives you a return ratio; subtract the spend from the outcome value first if you want net ROI. Express it as a ratio or percentage. This becomes your benchmark. Are you beating it with your next campaign?

Segment results. The overall campaign effectiveness number hides important variation. What worked with the 25-34 age group might not have worked with 35-44. What worked with existing customers might not have worked with new prospects. Dig in.

Feed insights back into planning. The point of measurement isn't reporting. It's learning. Use what you learn from one campaign to improve the next.
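The steps above can be sketched in a few lines: set a target upfront, subtract the baseline, calculate the return, then break it down by segment. This sketch calculates the return on incremental value (outcome minus baseline) rather than on total outcome value, which is an assumption on our part. The class name, target and figures are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class SegmentResult:
    name: str
    spend: float           # pounds spent on this segment
    outcome_value: float   # revenue observed during the measurement period
    baseline_value: float  # revenue expected without the campaign

    @property
    def incremental_value(self) -> float:
        return self.outcome_value - self.baseline_value

    @property
    def return_ratio(self) -> float:
        return self.incremental_value / self.spend


TARGET_RETURN = 1.5  # success criterion defined before the campaign ran

segments = [
    SegmentResult("25-34", spend=10_000, outcome_value=60_000, baseline_value=30_000),
    SegmentResult("35-44", spend=10_000, outcome_value=40_000, baseline_value=35_000),
]

total_spend = sum(s.spend for s in segments)
total_incremental = sum(s.incremental_value for s in segments)
overall_return = total_incremental / total_spend

print(f"Overall: {overall_return:.2f} (target {TARGET_RETURN}) "
      f"{'met' if overall_return >= TARGET_RETURN else 'missed'}")
for s in segments:
    status = "met" if s.return_ratio >= TARGET_RETURN else "missed"
    print(f"  {s.name}: {s.return_ratio:.2f} {status}")
```

In this example the campaign meets its target overall while one segment falls well short, which is the kind of variation the overall number hides.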

Start small. One campaign. One metric. One month. Then build from there.