The bottleneck was never the model

Open-source MMM frameworks like Meta's Robyn and Google's Meridian are free and solid. The modelling algorithms aren't your constraint. What costs money and time is everything that happens before the model runs: getting data into shape.

Data preparation takes up 60-80% of a typical MMM project timeline. This is why traditional engagements take 3-6 months. It's not because the maths is slow. It's because your data lives in 10+ places.

Your spend data sits in Google Ads. Your conversion data is in Google Analytics. Your offline spend is in spreadsheets in marketing ops. Channel costs come from agency invoices. Creative performance is in your ad platform. Promotional activity is trapped in someone's spreadsheet. Media planning numbers are different from actuals. Some channels have daily data. Others are monthly. Some data is missing entirely for specific periods.

This is the work. Someone has to chase each source. Reformat mismatched columns. Reconcile granularity differences. Fill in missing days with interpolation or estimates. Rename fields so they match across systems. Validate that a £50k spend figure means what you think it means. Check for duplicates. Handle currency conversions. Create a single clean file that a model can use.

Most of that time isn't spent thinking. It's spent extracting, copying, reformatting, and manually checking things. Exactly the kind of work machines can do.

What AI does in this process

This is where you need to be specific. AI doesn't magic your data into perfection. It does four specific things that save time and reduce manual effort.

Pattern recognition for column mapping. You have 47 columns across five different sources. AI learns which ones are the same thing with different names. Spend column in one system. Expenditure in another. Amount spent in a third. The model identifies these matches and suggests them to you. You review and approve. No more manual hunting through headers.
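To make the idea concrete, here is a minimal sketch of header matching using only Python's standard library. It is an illustration, not a description of any particular product: the canonical field names, the synonym lists, and the 0.8 threshold are all assumptions chosen for the example. The function only suggests a match and a score. A person still approves it.

```python
from difflib import SequenceMatcher

# Hypothetical canonical schema with known synonyms (assumed for illustration).
CANONICAL_FIELDS = {
    "spend": ["spend", "expenditure", "amount spent", "cost"],
    "date": ["date", "day", "report date"],
    "channel": ["channel", "media channel", "platform"],
}


def normalise(header: str) -> str:
    """Lower-case the header and turn separators into single spaces."""
    return " ".join(header.lower().replace("_", " ").replace("-", " ").split())


def suggest_mapping(header: str, threshold: float = 0.8):
    """Return (canonical_field, score) for the best match.

    If the best similarity score is below the threshold, the field is
    None so the header is left for a human to map by hand.
    """
    cleaned = normalise(header)
    best_field, best_score = None, 0.0
    for field, synonyms in CANONICAL_FIELDS.items():
        for synonym in synonyms:
            score = SequenceMatcher(None, cleaned, synonym).ratio()
            if score > best_score:
                best_field, best_score = field, score
    if best_score < threshold:
        return None, best_score
    return best_field, best_score
```

With this setup, `suggest_mapping("Amount_Spent")` and `suggest_mapping("Expenditure")` both point at `spend`, while an unrelated header such as `"Campaign ID"` comes back unmapped for manual review.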

Automated format detection. Your agency sends data as YYYYMMDD. Your platform exports as DD/MM/YYYY. Your spreadsheet has 1-Jan-2024. The system identifies each format, parses correctly, and outputs a single consistent timestamp. Same logic for currencies, decimal separators, and numeric formats. It doesn't guess. It detects patterns, suggests the format, and you confirm.
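A rough sketch of what "detect, suggest, confirm" can look like for dates, assuming a small hand-picked list of candidate formats and English month abbreviations. A format is only proposed if it parses every value in the column. If more than one format fits, the ambiguity is returned rather than resolved silently.

```python
from datetime import datetime

# Candidate formats are an assumption for this example; extend as needed.
# "%b" relies on English month abbreviations (locale-dependent).
CANDIDATE_FORMATS = ["%Y%m%d", "%d/%m/%Y", "%m/%d/%Y", "%d-%b-%Y", "%Y-%m-%d"]


def detect_date_formats(values):
    """Return every candidate format that parses all values in the column."""
    matches = []
    for fmt in CANDIDATE_FORMATS:
        try:
            for value in values:
                datetime.strptime(value, fmt)
        except ValueError:
            continue  # at least one value failed, so reject this format
        matches.append(fmt)
    return matches


def to_iso(values, fmt):
    """Convert values to ISO 8601 dates once a format has been confirmed."""
    return [datetime.strptime(value, fmt).date().isoformat() for value in values]
```

A column containing `05/03/2024` and `11/12/2024` matches both day-first and month-first formats, so both are returned and a person picks one. Add `25/12/2024` to the column and only the day-first format survives.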

Granularity mismatch detection. You have daily spend from Google Ads but only weekly data from your CRM. Monthly budget actuals from Finance. The system identifies these mismatches, flags them, and can either aggregate the finer-grained data up to a common level or record where interpolation was needed. Transparency, not hidden assumptions.
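As an illustration, daily figures can be rolled up to weekly totals while recording which weeks were built from fewer than seven days. This sketch assumes weeks start on Monday and that the input is a plain mapping of date to spend. Both are choices made for the example.

```python
from collections import defaultdict
from datetime import date, timedelta


def week_start(day: date) -> date:
    """Return the Monday of the week containing the given day."""
    return day - timedelta(days=day.weekday())


def daily_to_weekly(daily_spend):
    """Sum {date: spend} into {week_start: spend}.

    Also returns the sorted list of weeks with fewer than seven days of
    data, so partial weeks are flagged rather than hidden.
    """
    totals = defaultdict(float)
    days_seen = defaultdict(int)
    for day, spend in daily_spend.items():
        start = week_start(day)
        totals[start] += spend
        days_seen[start] += 1
    incomplete = sorted(start for start, count in days_seen.items() if count < 7)
    return dict(totals), incomplete
```

Ten days of data starting on a Monday produce one full week and one partial week. The partial week still gets a total, but it appears in the `incomplete` list for review.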

Anomaly detection for data quality. That one day in July where spend shows as 100x normal. That channel showing negative conversions. Missing data points in the middle of a time series. The system surfaces these anomalies so you can decide: is this real? Is it an error? Does it need investigation or correction?
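A simple version of these checks fits in a few lines. The sketch below flags missing values, negative values, and spikes relative to the series median. The median is used because a single 100x outlier would distort a mean. The 10x spike ratio is an arbitrary assumption for the example, and nothing is corrected automatically.

```python
from statistics import median


def flag_anomalies(series, spike_ratio=10.0):
    """Return (index, reason) pairs for values that need human review.

    None marks a missing data point. Spikes are measured against the
    median of the non-missing, non-negative values.
    """
    observed = [v for v in series if v is not None and v >= 0]
    typical = median(observed) if observed else 0.0
    flags = []
    for index, value in enumerate(series):
        if value is None:
            flags.append((index, "missing"))
        elif value < 0:
            flags.append((index, "negative"))
        elif typical > 0 and value > spike_ratio * typical:
            flags.append((index, "spike"))
    return flags
```

Each flag is a prompt for a decision, not a fix: the person reviewing it decides whether the value is real, an error, or something to investigate.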

None of this is magic. It's pattern matching plus human review at every stage. The output is model-ready data with a full audit trail showing what was changed and why. You keep control. You make the final call on anything that matters.

What this means for speed and cost

The compression is real.

Traditional timelines: 3-6 months from kickoff to results. Most of that is in data prep. Project cost: £80k-£150k per brand per market. 60-80% of that is labour on data work.

AI-assisted timelines: 3-6 weeks from kickoff to results. The modelling and analysis phases don't change. The data prep phase collapses from 8-12 weeks to 1-2 weeks because you're not manually reformatting hundreds of rows. Project cost: £15k-£30k per brand per market.

This changes the economics. At £100k per model, you run MMM once every two years if you're lucky. At £20k per model, you refresh quarterly. At £20k, a multi-market rollout becomes viable instead of impossible. At £20k, mid-market brands can afford it.

Cost isn't the only benefit. Speed means your results land in a planning window where you can act on them. Not six months later when the campaign is over.

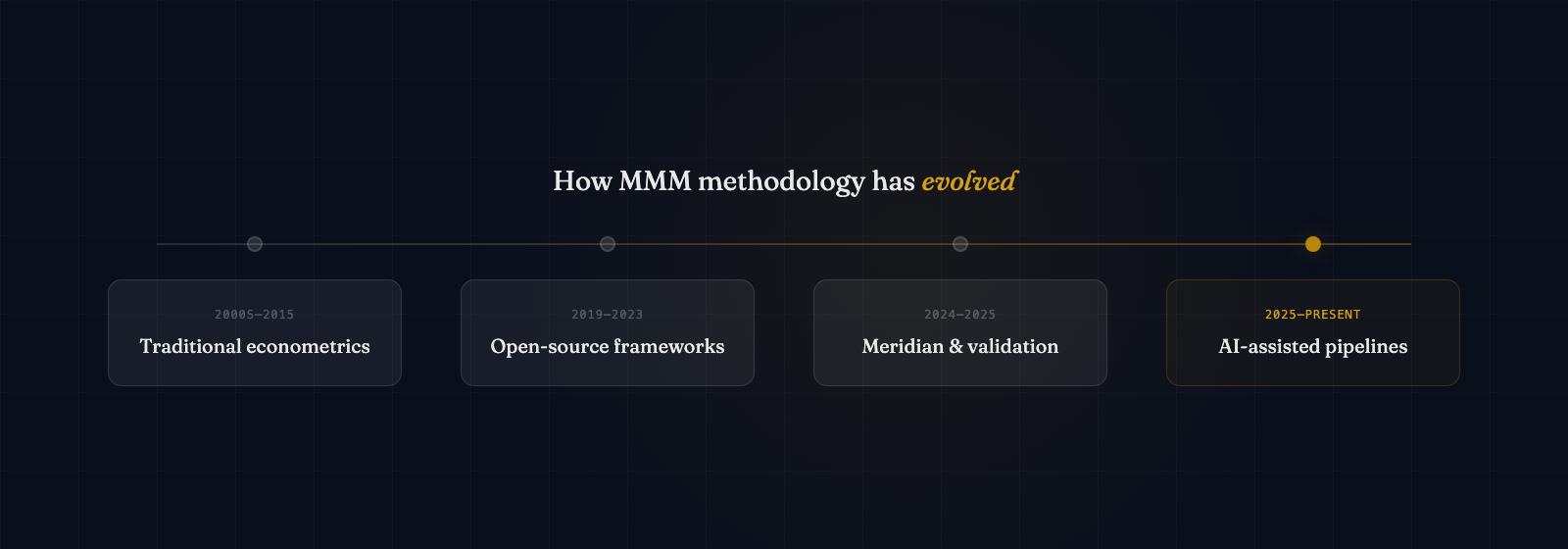

MMM delivery timelines are compressing as automation and AI remove data prep bottlenecks

AI automation replaces weeks of manual work with intelligent, auditable processes

How our pipeline works

We built our data pipeline specifically for this problem. It automates the data preparation layer for MMM.

You send your data in whatever format it exists in your organisation. Raw spend dumps from your platforms. Spreadsheets from agencies. Database exports from your analytics tool. Mismatched columns, different granularities, all of it. The pipeline cleans the data, maps columns automatically, detects and flags issues, validates consistency, and produces a model-ready output file.

Every transformation is logged. You can see exactly what changed, why it changed, and when. You review and approve before the data goes into your MMM. You maintain a complete audit trail for stakeholder sign-off. Human review happens at the stages that matter: confirming column mappings, signing off on anomalies, validating final outputs.
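To show what "what changed, why, and when" can mean in practice, here is a hypothetical audit record. It is a sketch of the general idea, not the pipeline's actual schema: the class names and fields are invented for illustration.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class AuditEntry:
    """One logged transformation (hypothetical structure)."""
    column: str
    row: int
    before: object
    after: object
    reason: str
    approved: bool = False
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


class AuditLog:
    """Collects entries and reports which ones still await sign-off."""

    def __init__(self):
        self.entries = []

    def record(self, column, row, before, after, reason):
        entry = AuditEntry(column, row, before, after, reason)
        self.entries.append(entry)
        return entry

    def pending(self):
        """Entries a reviewer has not yet approved."""
        return [entry for entry in self.entries if not entry.approved]
```

A change such as parsing `01/02/2024` as `2024-02-01` would be recorded with its reason, then stay in `pending()` until a reviewer marks it approved.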

The goal is simple: compress data preparation from weeks to days. Keep quality high. Keep you in control. Make MMM fast enough and cheap enough that you can use it regularly instead of as a one-off exercise.

What this doesn't change

Be clear about what AI doesn't do here. It doesn't replace the need for good source data. If your analytics implementation is wrong, automated tools clean wrong data. Garbage in, garbage out still applies. You still need to validate that your source systems are tracking what you think they're tracking.

It doesn't replace experienced interpretation. The model output shows you correlations and elasticities. An analyst still needs to decide whether a result is real or an artefact. Whether a spend coefficient makes sense given what you know about that channel. Whether a recommendation is actionable, or merely statistically significant without being practically useful. AI speeds up the mechanical work. It doesn't replace judgement.

It doesn't replace good MMM practice. You still need sufficient historical data. Your channels need enough variance to estimate. Your data needs to cover a period long enough to capture seasonal patterns. Confounding variables still matter. Causal inference is still hard. All the fundamental constraints of MMM remain.

What changes is only the bottleneck. Data preparation was the thing slowing you down. That part gets faster and cheaper. Everything else stays the same.