The types of providers you'll encounter

Start by understanding the ecosystem. There are roughly four types of MMM providers, each with different incentives and capabilities.

The big consultancies (Deloitte, McKinsey, Bain, EY, etc.) have deep expertise and resources. They also have overhead. A McKinsey engagement costs six figures minimum because you're paying for senior talent and their firm's infrastructure. The work is usually solid. The risk: they'll over-engineer the solution and the model will collect dust on a shelf after the engagement ends. They also build models as a service delivery, not as a repeatable product. You'll get a great report. You won't get ongoing support.

Specialist MMM firms (Adverity, Measured, Location Sciences, and smaller boutiques) focus exclusively on measurement. They typically charge less than the big firms because they have lower overhead. They specialize in specific scenarios (ecommerce, DTC, retail, etc.). The risk: some are better at technology than strategy. You might get a well-built model in a slick interface but limited business guidance on what to do with it.

Marketing analytics platforms (Convertro, Rockerbox, others) bolt MMM onto a broader analytics system. These tools let you combine MMM with attribution, analytics, and reporting in one interface. The appeal is integration. The risk: MMM is often secondary to their core product, so modeling capability lags behind dedicated firms. They're strong on ease of use, weaker on methodological sophistication.

In-house builds with open-source frameworks (using PyMC, formerly PyMC3, Stan, or TensorFlow) mean hiring data scientists and building your own model. This is cheapest at the margin but requires real expertise to execute and maintain. The risk: data science talent is expensive, and MMM is a specific enough domain that general data scientists need ramp time. You'll get a cheap solution if you have the talent. Most companies don't.

MMM provider selection framework based on cost, timeline, and capability requirements

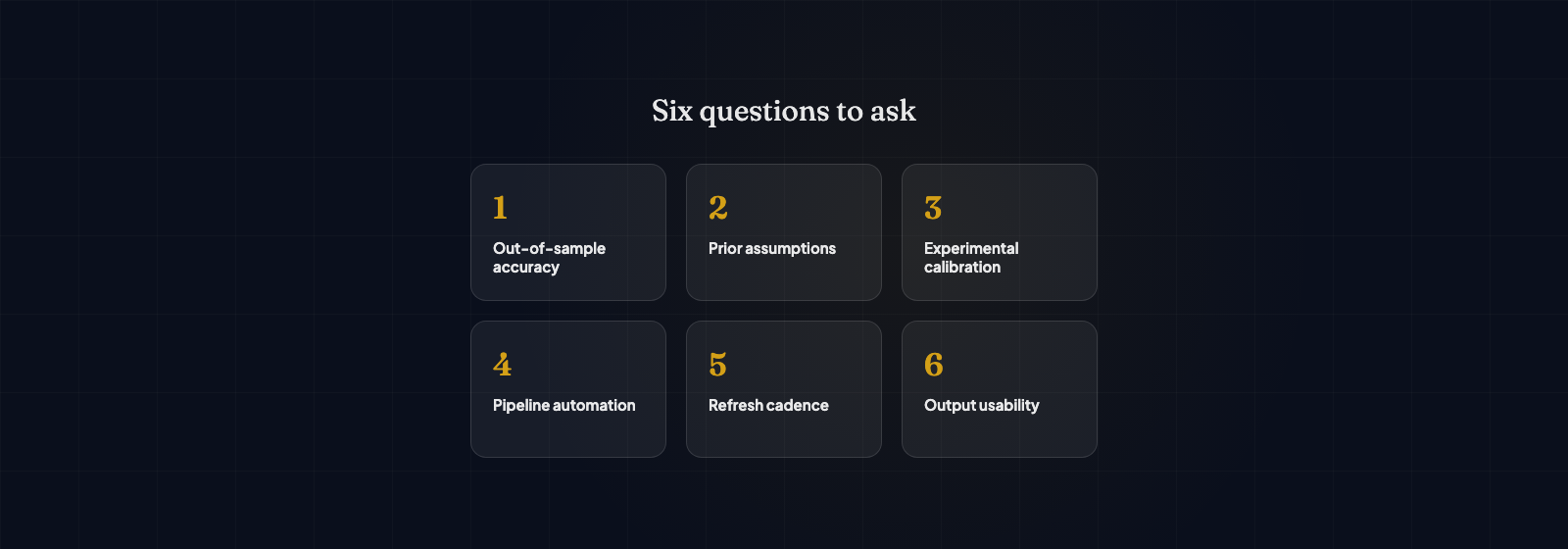

Critical questions to ask any provider

Before you compare vendors, ask these questions. They'll reveal whether a provider knows what they're doing.

How do you handle multicollinearity? This is the technical term for channels whose spend moves together: Google Ads and Meta Ads budgets both spike in January, for example. When variables are correlated, the model can't easily assign credit to either one. A good provider will explain their approach explicitly: regularization, priors, domain assumptions, etc. A bad provider will either dodge the question or tell you their black-box algorithm "handles it." Walk away from that conversation.
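To see how severe the problem is in your own data before the vendor call, a rough check is the correlation between two channels' weekly spend and the variance inflation factor (VIF) it implies. The sketch below is only an illustration: the spend figures are made up, the function names are hypothetical, and the two-predictor shortcut VIF = 1 / (1 - r^2) does not replace a full multi-channel diagnostic. A commonly cited rule of thumb treats a VIF above roughly 5 to 10 as a warning sign.

```python
import math

def pearson(x, y):
    """Pearson correlation between two equal-length sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    return cov / math.sqrt(var_x * var_y)

def vif_two_predictors(r):
    """VIF when the model has exactly two predictors with correlation r."""
    return 1.0 / (1.0 - r ** 2)

# Hypothetical weekly spend (in $k); both channels spike in the same weeks.
google = [10, 12, 11, 30, 28, 13, 12, 11]
meta = [8, 9, 9, 25, 24, 10, 9, 9]

r = pearson(google, meta)
vif = vif_two_predictors(r)
print(f"correlation = {r:.3f}, VIF = {vif:.0f}")
```

If your own channels look like this, the vendor's answer about regularization or priors matters a great deal, because the data alone cannot separate the two channels.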

What happens when my channels aren't independent? Real businesses have dependent channels. Your email audience came from your paid ads. Your retargeting campaign targets people who visited from organic. If your provider says "that's not a problem for our model," they're not being honest. The honest answer is: "That's a real challenge. Here's how we account for it, and here are the limitations you should understand."

How do you validate the model? Ask them how they confirm their results are realistic. Do they test predictions on held-out data? Do they compare model results to incrementality tests you've run? Do they sanity-check coefficients against industry benchmarks? A provider who says "the model fits the data well" is giving you one dimension of validation. That's not enough. You need external validation—testing whether the model's predictions would hold in new data.
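The held-out test can be sketched in a few lines. This is a deliberately simplified illustration, not a real MMM: it fits a one-variable straight line (revenue against spend) on the earlier weeks and scores it on later weeks the model never saw. The data, the split point, and the function names are all assumptions made for the example.

```python
def fit_line(x, y):
    """Ordinary least squares for y = intercept + slope * x."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    slope = sxy / sxx
    return mean_y - slope * mean_x, slope

def mape(actual, predicted):
    """Mean absolute percentage error, as a fraction (0.05 means 5%)."""
    return sum(abs(a - p) / abs(a) for a, p in zip(actual, predicted)) / len(actual)

# Hypothetical weekly spend and revenue (in $k), in time order.
spend = [10, 12, 15, 11, 18, 20, 14, 16, 19, 13, 17, 21]
revenue = [52, 58, 66, 55, 75, 79, 63, 70, 77, 60, 72, 83]

split = 8  # fit on the first 8 weeks, hold out the last 4
intercept, slope = fit_line(spend[:split], revenue[:split])

train_pred = [intercept + slope * s for s in spend[:split]]
test_pred = [intercept + slope * s for s in spend[split:]]

train_mape = mape(revenue[:split], train_pred)
test_mape = mape(revenue[split:], test_pred)
print(f"in-sample MAPE: {train_mape:.2%}, held-out MAPE: {test_mape:.2%}")
```

The number to ask a vendor for is the held-out error, not the in-sample one. A large gap between the two is a typical symptom of overfitting.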

What's your typical project timeline and budget? A full MMM from data audit through model delivery typically takes 12-16 weeks and costs $40-150k depending on data complexity and vendor. If a vendor quotes you $20k in two weeks, they're not doing the work you need. If they quote $500k, they might be over-scoping. Reasonable timelines and budgets exist. Understand what they are before you compare quotes.

Who owns the model after delivery? Some vendors deliver a report and a spreadsheet. You own it, but you can't update it without calling them. Others deliver documented code and you own it completely. Others keep it in their system and you access it through their interface. Each ownership arrangement has pros and cons. Know which one you're buying. If you want flexibility later, you need to own the model. If you want simplicity, outsource it.

How do you handle new channels or major external shocks? COVID, iOS privacy changes, platform algorithm updates—things happen that your historical data didn't predict. Does the provider have a process for retraining the model? Do they manually adjust for known shocks? Do they have a mechanism for incorporating new channels? If they tell you "the model just adapts," that's not a real answer. Models are historical. External shocks require manual intervention.

Red flag: a vendor who promises they can explain exactly why your revenue was $X last week. MMM is probabilistic, not deterministic. It gives you ranges and confidence intervals. If someone promises point predictions on what already happened, they're either overselling or they're building something too flexible (which will overfit and fail on new data).

Red flags to watch for

Black-box models that can't be explained. You should understand the basic structure of how your model works. Not the math—the logic. Which channels does it measure? How does it handle promotions? What does it assume about seasonality? If the provider won't explain this at a business level, walk away. Unexplainable models are often overfit or they hide shortcuts that make them useless.

No discussion of uncertainty. A provider who says "Email drives 8% of revenue" is being misleading if they don't also say "with a 90% confidence interval of 5-11%." All models have uncertainty. If they don't mention it, they're not being honest about limitations. Statistical rigor requires stating what you don't know.
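One simplified way to picture where such a range comes from is a percentile bootstrap: resample the estimates many times and look at how much the average moves. The sketch below is an assumption-laden illustration only. The weekly share figures are invented, the function name is hypothetical, and a real MMM would normally derive its interval from the model itself (for example a posterior distribution or repeated refits) rather than from a list of weekly numbers.

```python
import random

def bootstrap_interval(values, n_resamples=2000, lower=0.05, upper=0.95, seed=0):
    """Percentile bootstrap interval for the mean of `values`."""
    rng = random.Random(seed)  # fixed seed so the example is repeatable
    n = len(values)
    means = []
    for _ in range(n_resamples):
        # Resample with replacement, same size as the original data.
        sample = [values[rng.randrange(n)] for _ in range(n)]
        means.append(sum(sample) / n)
    means.sort()
    return means[int(lower * n_resamples)], means[int(upper * n_resamples)]

# Hypothetical weekly estimates of email's share of revenue, in percent.
email_share = [6.5, 9.1, 7.8, 8.4, 10.2, 5.9, 8.8, 7.2, 9.5, 6.9, 8.1, 7.6]

point = sum(email_share) / len(email_share)
low, high = bootstrap_interval(email_share)
print(f"Email share: {point:.1f}% (90% interval {low:.1f}% to {high:.1f}%)")
```

A provider should be able to hand you the equivalent of the last line for every channel: a point estimate together with a range.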

Guarantees about accuracy. "Our model is 95% accurate" means nothing without context. Accurate at what? Predicting daily revenue? Monthly revenue? Average channel contribution? Vendor claims about accuracy without qualification are a red flag.

High-pressure sales around timeline. "We can deliver in four weeks" might be true for small projects. It's suspicious for complex ones. A vendor pressuring you to decide quickly often has low utilization and wants to slot you into a schedule. Take time. Good vendors aren't desperate for immediate decisions.

No experience with your business type. Don't hire a consultant who specializes in fast-moving consumer goods to model your B2B SaaS business. Different business models have fundamentally different dynamics. Ask for case studies in your industry. If they don't have any, they're taking a risk (and so are you).

Reluctance to explain methodology. "That's proprietary" or "our secret sauce" might apply to specific assumptions, but the general methodology shouldn't be proprietary. If a vendor won't explain whether they're using Bayesian or frequentist approaches, how they handle seasonality, or what priors they use, be skeptical. Legitimate methodology is usually publicly documented in academic papers or vendor documentation.

What a good proposal looks like

When comparing proposals, look for these elements:

- A clear scope of what data you'll provide and what the vendor will validate.
- Realistic timeline with defined phases (data audit, exploration, modeling, validation, delivery).
- Transparent budget that breaks out costs by component, not a lump sum.
- Methodology section that explains the approach in business terms, not just math.
- Success criteria defined upfront. What does good look like? How will we know if the model is working?
- Transition or handoff plan. How will you support us after delivery, or how will we maintain it ourselves?

A vague proposal that says "we'll build an MMM using advanced statistical techniques" is not a good proposal. A good proposal is specific about what you'll get, when you'll get it, and how much it costs.

Cost benchmarks and ROI

Full-build MMM projects typically range from $40k to $150k depending on complexity. A smaller, lower-budget build might run $25-40k. Ongoing support and refreshes typically run 20-30% of the build cost annually. Cloud platform MMM tools (Measured, Adverity) range from $5k to $30k annually depending on features and data volume.

Is it worth it? If an MMM project costs $80k and it improves your media mix efficiency by 5%, and you're spending $5M annually on media, that's a potential $250k benefit in the first year. The math works if you use the model to optimize. If you build it and ignore it, the ROI is negative.
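The arithmetic in that example is easy to rerun with your own numbers. The sketch below uses the figures from the paragraph above ($80k build, $5M annual media spend, 5% efficiency gain). The function name is hypothetical, and the calculation ignores ongoing support costs and assumes the efficiency gain is fully realized.

```python
def first_year_benefit(build_cost, annual_media_spend, efficiency_gain):
    """Return (gross benefit, net benefit) for the first year."""
    gross = annual_media_spend * efficiency_gain
    return gross, gross - build_cost

build_cost = 80_000
annual_media_spend = 5_000_000
efficiency_gain = 0.05  # 5% improvement in media mix efficiency

gross, net = first_year_benefit(build_cost, annual_media_spend, efficiency_gain)

# Smallest efficiency gain at which the project pays for itself in year one.
break_even_gain = build_cost / annual_media_spend

print(f"gross benefit: ${gross:,.0f}")
print(f"net benefit:   ${net:,.0f}")
print(f"break-even efficiency gain: {break_even_gain:.1%}")
```

Under these assumptions the project breaks even at a 1.6% efficiency gain, which is a useful threshold to keep in mind when a vendor quotes expected improvements.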

The real question isn't "how much does MMM cost" but "what will we do differently if we have a model?" If you don't have a clear answer, wait. Build the model only when you have identified a specific decision you'll make with it.

Trade-offs across MMM provider types: cost vs speed vs customisation vs support

Making the final decision

You're choosing a partner for 12-16 weeks minimum, often longer. Talk to their references. Ask specifically: Did the vendor deliver what they promised? Do you use the model actively? Would you hire them again? Did they disappear after delivery or do they offer ongoing support? These conversations matter more than case studies.

Evaluate three vendors minimum. Get detailed proposals from each. Ask the same critical questions. The goal isn't to find the cheapest option. It's to find a vendor who understands your business, is transparent about limitations, and will stay engaged after delivery. If you're still not sure whether MMM is right for you, that's worth clarifying before you hire anyone. A good vendor will help you think that through. A mediocre one will just sell you a project.