Marketing Mix Modelling is having a moment. Half of marketers worldwide now use some form of it, and 67% of marketing leaders plan to increase their investment over the next two years (Gartner, 2024). But adoption is running ahead of execution. 75% of marketers say their current measurement approaches aren't delivering the speed, accuracy, or trust they need (IAB/BWG, State of Data 2026).

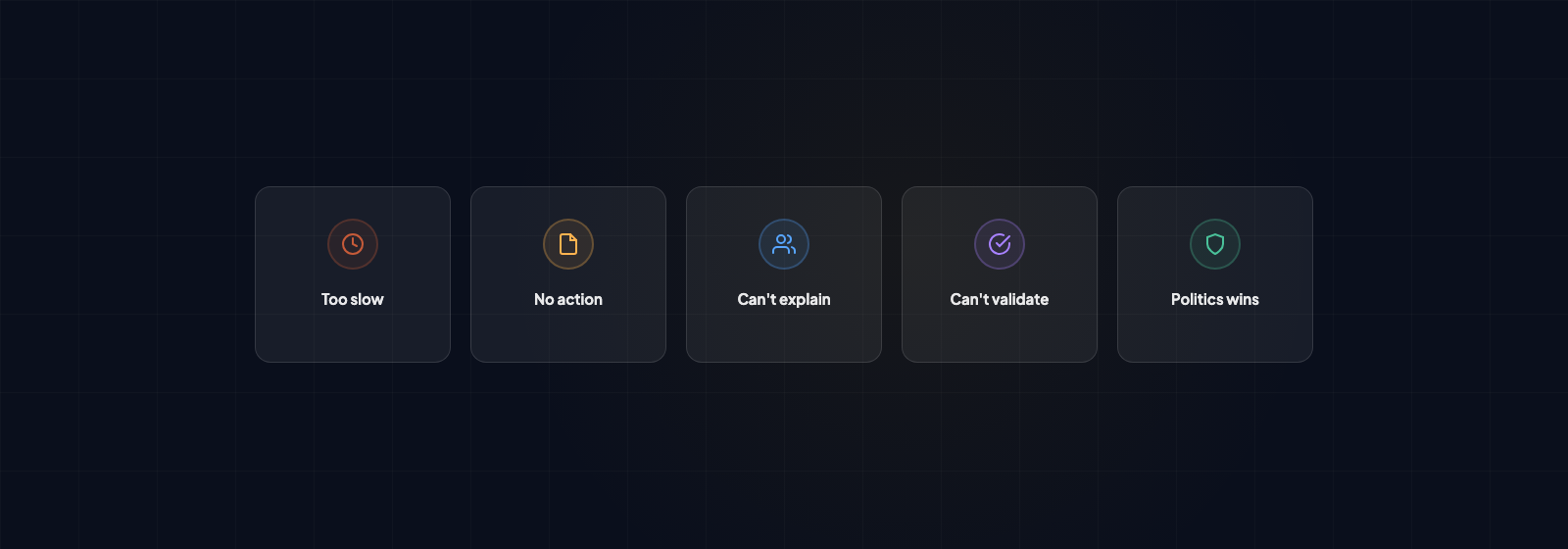

It takes so long that the results are out of date before anyone sees them

A typical agency-led MMM project takes three to six months to deliver results (Keen Decision Systems, 2025). In complex enterprise deployments, it can stretch to twelve. By the time insights land, the budget has been committed, the agency has been briefed, and the planning window has closed.

The reason it takes this long is almost never the modelling. The VP of Measurement at Funnel put it bluntly: "The model is only 20% of the work. The other 80% is consolidating and transforming data." Circana's research confirms the range: data preparation consumes 30% of project time for organisations with strong data infrastructure, and 70% or more for those without it.

That 70% figure is where most brands sit. Spend data is scattered across platforms. Agencies report on different cycles. Naming conventions change quarterly. Someone always needs "one more week" to reconcile their numbers.

"Projects only move as fast as the slowest piece of data."

Circana

The modelling itself is computationally fast. Meta's Robyn can test thousands of model combinations per minute. Google's Meridian launched a no-code Scenario Planner in February 2026. The bottleneck is upstream: getting clean, consistently structured data into the model in the first place.

OptiMine's research found that with an annual refresh cadence, models can be off by 50 to 100%, and "in some cases those models can do more harm than good." That's not a minor drift. That's a tool actively misleading the people who rely on it.

Ask any measurement partner what percentage of the project timeline is data preparation, and how much of it they have automated. If the answer is "we handle it manually each time," the project will take months regardless of what they promise. The data pipeline is the constraint, not the statistics.
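
To make "automated data preparation" concrete, here is a minimal sketch of one small piece of it: mapping inconsistent channel labels from different platform exports onto one canonical name and rolling spend up to weekly totals. The alias table, the channel labels, and the `consolidate` helper are all hypothetical, invented for illustration. A real pipeline would also handle currencies, time zones, and late-arriving data.

```python
from collections import defaultdict
from datetime import date, timedelta

# Hypothetical alias table: each raw label seen in an export maps to one canonical channel.
CHANNEL_ALIASES = {
    "fb_prospecting": "paid_social",
    "meta - prospecting": "paid_social",
    "paid social": "paid_social",
    "tv_national": "tv",
    "linear tv": "tv",
}


def week_start(day: date) -> date:
    """Return the Monday of the week containing `day`."""
    return day - timedelta(days=day.weekday())


def consolidate(rows):
    """Aggregate (date, raw_channel, spend) rows into weekly spend per canonical channel.

    Labels missing from the alias table are returned separately so they can be
    mapped explicitly rather than silently dropped.
    """
    weekly = defaultdict(float)
    unmapped = set()
    for day, raw_channel, spend in rows:
        channel = CHANNEL_ALIASES.get(raw_channel.strip().lower())
        if channel is None:
            unmapped.add(raw_channel)
            continue
        weekly[(week_start(day), channel)] += spend
    return dict(weekly), unmapped


# Illustrative rows, not real data.
rows = [
    (date(2025, 3, 3), "FB_Prospecting", 1200.0),
    (date(2025, 3, 5), "Meta - Prospecting", 800.0),
    (date(2025, 3, 6), "Linear TV", 5000.0),
    (date(2025, 3, 10), "TikTok_Q1", 300.0),
]
weekly, unmapped = consolidate(rows)
print(weekly)
print("Needs mapping:", unmapped)
```

The point of surfacing unmapped labels is that a renamed campaign becomes a one-line fix in the alias table instead of "one more week" of reconciliation.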

The results don't change any decisions

Only 20% of analytic insights deliver business outcomes (Gartner, 2019). Only 3% of practitioners at the ANA Media Conference said their measurement solution "does everything I need it to do and more" (AdExchanger, 2025). And only 21% of marketing leaders say they receive actionable insights in real time, down from 26% two years ago (NielsenIQ, 2024). The situation is getting worse, not better.

The reason most MMM results get filed rather than acted on is structural. Traditional engagements are designed around annual or semi-annual refresh cycles: a single study, once or twice a year, producing a single set of recommendations. But marketing teams don't make decisions once a year. They make them monthly, sometimes weekly.

"CMOs hate that they spend 6 months waiting on their MMM results and then another 6 months trying to explain why the MMM results don't drive the promised returns when put into practice."

Recast's founder, from CMO interviews

The output format compounds the problem. Most MMM deliverables are designed to be defensible rather than usable. A 40-page methodology deck with decomposition charts looks impressive in a steering committee. But the person who needs to act on it, the media planner deciding whether to increase spend in Q3, needs something quite different. They need to know what happens if they move 15% from TV to paid social.

When the CFO asks "What would happen if we cut our marketing budget by 10%?", the answer from legacy MMM is what AdExchanger calls the six fatal words: "Let me get back to you."

Before the project starts, identify the specific budget question that is live right now. What would change your mind? What decision needs to be made in the next quarter? Build the output backwards from that question. A two-page scenario analysis that directly informs a decision your team is already facing is worth more than a comprehensive study that sits in a shared drive.
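
A scenario analysis of this kind can be very small. The sketch below assumes the model has already produced a saturating response curve per channel and asks the question from earlier: what happens if 15% of the TV budget moves to paid social? The curve shape, every parameter value, and the budgets are hypothetical placeholders, not outputs of any real model.

```python
# Hypothetical fitted response curves (an assumption for illustration):
# revenue = ceiling * spend / (spend + half_saturation)
CURVES = {
    "tv": {"ceiling": 900_000.0, "half_saturation": 400_000.0},
    "paid_social": {"ceiling": 600_000.0, "half_saturation": 150_000.0},
}


def predicted_revenue(channel, spend):
    """Revenue the (hypothetical) curve attributes to `spend` on one channel."""
    curve = CURVES[channel]
    return curve["ceiling"] * spend / (spend + curve["half_saturation"])


def total_revenue(budget):
    """Sum predicted revenue across every channel in the budget."""
    return sum(predicted_revenue(ch, spend) for ch, spend in budget.items())


def shift_budget(budget, source, target, fraction):
    """Return a new budget with `fraction` of the source channel's spend moved to the target."""
    moved = budget[source] * fraction
    scenario = dict(budget)
    scenario[source] -= moved
    scenario[target] += moved
    return scenario


# Illustrative current plan, not real data.
current = {"tv": 500_000.0, "paid_social": 200_000.0}
scenario = shift_budget(current, "tv", "paid_social", 0.15)
delta = total_revenue(scenario) - total_revenue(current)

print(f"Current plan:  {total_revenue(current):,.0f}")
print(f"Scenario plan: {total_revenue(scenario):,.0f}")
print(f"Difference:    {delta:+,.0f}")
```

The same three functions answer the CFO's question as well: scale every channel's spend by 0.9 and compare totals. What matters is that the answer takes seconds, not a follow-up meeting.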

Nobody in the room can explain the results to the people who control the budget

Only 49% of senior marketing and finance leaders can clearly explain their marketing measurement approach to the board (Haus Decision Confidence Index, March 2026). And 74% report abandoning or scaling back a marketing initiative because they lacked confidence in how to measure its impact.

The disconnect runs deeper than presentation skills. McKinsey's 2025 CMO research found that 70% of CEOs measure marketing's impact based on year-over-year revenue growth and margin, but only 35% of CMOs track those as their top metrics. They are not speaking the same language.

Nearly 70% of CEOs and CFOs told Gartner they felt their CMO had "failed to deliver promised results from marketing strategy." Only 22% of CFOs said their CMO had been clear about what marketing was even responsible for.

"We all study behavioural economics for our own ways to nudge consumers along the path to purchase. We've got to do the same with our CFO, our CEO, the C-suite."

Pam Forbus, former CMO, Pernod Ricard North America

This creates a doom loop. CMOs are under more pressure than ever to prove value: pressure from CFOs is up 21%, from CEOs 20%, and from boards 52% since 2023 (CMO Survey, Spring 2025). But the measurement frameworks they rely on, including MMM, produce outputs designed for data scientists. Adstock decay rates, Variance Inflation Factors, and posterior predictive checks are meaningless to a CFO who wants to know whether the marketing budget is contributing to revenue growth or burning cash.

Produce two versions of every MMM output. The technical document covers model diagnostics and specification choices for anyone who wants to interrogate the methodology. A separate planning document answers, in plain language: what should we do differently, what is the expected impact, and what does it mean for the budget? That document should not contain a single reference to R-squared or Adstock. It should contain numbers, a recommendation, and a clear link to the decision it informs. And get finance involved in defining how ROI is calculated before the project starts, not after.

You have no way of knowing whether the model is any good

This is the most technically important problem on this list, and the one most brands are least equipped to evaluate.

Most brands receive a model accompanied by a high R-squared value — the classic measure of how well the model fits historical data. An R-squared of 0.92 looks reassuring. But as Recast's research puts it: "You can have a terrible model that has a really high R-squared, and you can have a great model that has a really low R-squared."

"Many modellers openly admit they're surprised at how rarely their findings are challenged, and how seldom clients ask to see the battery of statistical tests that would reveal how much the results can really be trusted."

Lindsay Rapacchi, Bauer Media, in Marketing Week

The published industry benchmarks are worth knowing. Meta's Robyn framework flags R-squared below 0.8 as "not ideal" and above 0.9 as "ideal", but Stella's validation guide flags anything above 0.95 as suspicious for overfitting. For MAPE (Mean Absolute Percentage Error), industry experts consider 5 to 15% reasonable for well-specified models (Funnel.io). Out-of-sample accuracy, where the model predicts a period it hasn't been trained on, should be within 10 to 20% (MarketingIQ, Stella).
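
These checks are simple enough to run yourself if a partner shares actual and predicted values. The sketch below computes R-squared and MAPE on the fitting period and MAPE on a holdout period, then applies the thresholds quoted above. The weekly figures are made up for illustration, and treating a holdout error above 20% as a flag is one reading of the "10 to 20%" guidance, not a published rule.

```python
def r_squared(actual, predicted):
    """Share of variance in `actual` explained by `predicted`."""
    mean = sum(actual) / len(actual)
    ss_res = sum((a - p) ** 2 for a, p in zip(actual, predicted))
    ss_tot = sum((a - mean) ** 2 for a in actual)
    return 1.0 - ss_res / ss_tot


def mape(actual, predicted):
    """Mean Absolute Percentage Error, in percent. Assumes no actual value is zero."""
    errors = [abs(a - p) / abs(a) for a, p in zip(actual, predicted)]
    return 100.0 * sum(errors) / len(errors)


def review(fit_r2, holdout_mape):
    """Return plain-language flags based on the thresholds quoted in the article."""
    flags = []
    if fit_r2 < 0.8:
        flags.append("In-sample R-squared below 0.8: fit is weak.")
    if fit_r2 > 0.95:
        flags.append("In-sample R-squared above 0.95: check for overfitting.")
    if holdout_mape > 20.0:  # assumed cut-off, see lead-in
        flags.append("Holdout error above 20%: predictions do not generalise.")
    return flags


# Illustrative weekly sales (actual vs model), not real data.
fit_actual = [100, 120, 90, 110, 130, 105]
fit_predicted = [102, 118, 93, 108, 127, 107]
holdout_actual = [115, 95, 125]      # weeks the model never saw
holdout_predicted = [100, 110, 105]

fit_r2 = r_squared(fit_actual, fit_predicted)
fit_mape = mape(fit_actual, fit_predicted)
holdout_mape = mape(holdout_actual, holdout_predicted)

print(f"In-sample R-squared: {fit_r2:.3f}")
print(f"In-sample MAPE:      {fit_mape:.1f}%")
print(f"Holdout MAPE:        {holdout_mape:.1f}%")
for flag in review(fit_r2, holdout_mape):
    print("FLAG:", flag)
```

In this invented example the model fits its own history almost perfectly yet is several times less accurate on the weeks it has not seen, which is exactly the gap a headline R-squared hides.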

But here's the statistic that matters most: uncalibrated MMM models show a 25% average difference from ground truth ROAS (Analytic Edge, commissioned around Meta's Robyn, 2024). That means the typical model, without experimental validation, is out by a quarter on its core output. You are making budget decisions based on numbers that are, on average, 25% off, and you have no way of knowing in which direction.

The strongest validation is calibration against real-world experiments: running a geo holdout test (pausing advertising in a subset of regions and measuring the difference) to compare the model's predictions against what happened in the real world. Meta's HBR-published research (2023, based on 18 studies with advertisers) describes this as the emerging gold standard. The IAB's 2025 best practices guide now recommends requiring "at least two supporting signals" for any material budget decision.
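
The comparison at the heart of calibration is simple arithmetic. The sketch below derives an experimental ROAS from a geo holdout and measures how far the model's ROAS sits from it. All figures are invented, and the function names are hypothetical. It also assumes the holdout regions' revenue has already been scaled to be comparable with the test regions; a real test needs matched regions and an uncertainty interval, both omitted here.

```python
def experiment_roas(revenue_with_ads, revenue_without_ads, ad_spend):
    """Incremental revenue per unit of spend, measured by the geo holdout."""
    return (revenue_with_ads - revenue_without_ads) / ad_spend


def calibration_gap(model_roas, test_roas):
    """Relative difference between the model's ROAS and the experimental estimate."""
    return (model_roas - test_roas) / test_roas


# Illustrative figures, not real data.
test_roas = experiment_roas(
    revenue_with_ads=1_300_000.0,     # regions where advertising kept running
    revenue_without_ads=1_000_000.0,  # comparable regions where it was paused
    ad_spend=150_000.0,
)
model_roas = 2.5  # what the (hypothetical) MMM reports for the same channel

gap = calibration_gap(model_roas, test_roas)
print(f"Experiment ROAS: {test_roas:.2f}")
print(f"Model ROAS:      {model_roas:.2f}")
print(f"Model is off by: {gap:+.0%}")
```

A positive gap means the model is giving the channel more credit than the experiment supports; a negative gap means the channel is being undervalued. Either way, the sign is only visible once an experiment exists.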

Ask three questions of any measurement partner. Can you show me the out-of-sample accuracy on a holdout period? What prior assumptions did you set before the model ran, and why? Have you calibrated against any experimental results? If the answer to all three is no, you are being asked to trust a model on the basis of how well it fits data it was specifically built to fit.

The results are politically inconvenient, so nothing changes

This is the most common reason MMM findings are ignored, and the least often discussed.

The numbers are stark. 91% of organisations cite cultural challenges and change management — not technology — as the principal barrier to becoming data-driven. That figure has remained stable for five years running (NewVantage Partners, 2025). Only 24% of organisations describe themselves as data-driven (Forbes/NewVantage, 2023).

A Gartner survey of 377 marketing analytics professionals found that one-third of decision-makers cherry-pick data that supports a decision they have already formed, 26% don't review the data at all, and 24% go with their gut.

The sunk cost research explains why. Arkes and Blumer's foundational study found that 85% of decision-makers chose to continue a failing project when prior investment was mentioned, versus 10% when it wasn't. Kahneman and Tversky established that the pain of losing is approximately twice as powerful as the pleasure of an equivalent gain. Confirmation bias has the strongest negative impact on marketing decision quality of any cognitive bias studied. The person who championed the underperforming channel is psychologically the least likely to accept evidence that it is underperforming.

"The person running the model also purchased the TV media and conveniently made TV look like the hero."

Stella's MMM Guide

The counter-example is instructive. Uber's analytics team suspected Meta rider-acquisition ads were non-incremental. An MMM flagged it. A three-month incrementality test (Meta ads turned off, no drop in riders) confirmed it. Uber reallocated $35 million annually to higher-ROI channels. This worked because the test was designed before the result was known, the decision criteria were agreed in advance, and the experiment was run by a team without a stake in the outcome.

Frame MMM as a decision tool before the project starts, not a verdict after it finishes. Get your key stakeholders — marketing, finance, commercial — to agree upfront on what they would be willing to change their minds about. Make it specific: if the model shows TV is delivering half the ROI of paid social, what would we do? If display has hit diminishing returns, what is the process for reallocating? When those questions are agreed in advance, the results become a shared input rather than a threat. The champion of the underperforming channel helped define the question. It is much harder to dismiss an answer to a question you helped set.

So when does MMM work?

The brands that extract real value from MMM share three things: the data pipeline is automated so results arrive while decisions are still live, the output is designed for the people making budget decisions rather than the people building models, and the organisation has agreed in advance what it would change based on the findings.

MMM works best when you are spending meaningfully across multiple channels and you don't have a way to measure what is driving growth. As a rough guide, brands spending under £500k on media typically find the cost of measurement outweighs the insight.

If you are trying to work out whether MMM makes sense for your business, we're happy to have an honest conversation about it — including telling you if it doesn't.